What is imbalanced data? Simply explained

Imbalanced data is a common occurrence when working with classification machine learning models. In this post, I explain what imbalanced data is and answer common questions that people have.


What is imbalanced data?

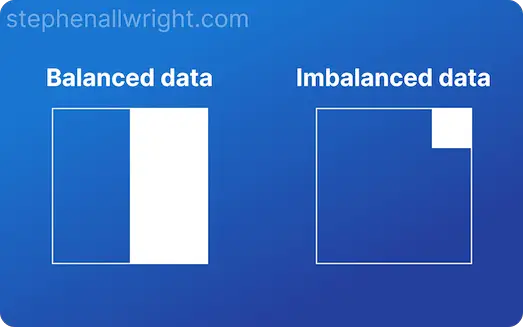

Imbalanced data refers to a situation, primarily in classification machine learning, where one target class accounts for a much larger share of the observations than the other class or classes. Imbalanced data occurs frequently in real-world problems, so data scientists often have to deal with it.

Imbalanced data example

To demonstrate what an imbalanced dataset looks like, let’s use an example where we are predicting the occurrence of an illness. This is a typical imbalanced problem, where the vast majority of people will not test positive but a small proportion will.

The simplified version of this dataset would look like this:

| Resting heart rate (bpm) | Age (years) | Illness present |
|---|---|---|
| 100 | 65 | 1 |
| 65 | 60 | 0 |
| 70 | 40 | 0 |
| 50 | 30 | 0 |
| 80 | 35 | 0 |
| 90 | 20 | 0 |
| 55 | 50 | 0 |
| 75 | 45 | 0 |
| 85 | 25 | 0 |
| 60 | 55 | 0 |

We can see that there are 10 observations, of which 1 is a positive case, meaning that 10% of our examples belong to the positive class. This would commonly be considered an imbalanced dataset.
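As a quick check of that arithmetic, the table can be loaded into a Pandas DataFrame. This is a minimal sketch: the snake_case column names are my own choice rather than part of the original table. Because the target is coded as 0 and 1, its mean equals the share of positive cases.

```python
import pandas as pd

# The illness table from above (column names chosen for illustration)
illness = pd.DataFrame(
    {
        "resting_heart_rate": [100, 65, 70, 50, 80, 90, 55, 75, 85, 60],
        "age": [65, 60, 40, 30, 35, 20, 50, 45, 25, 55],
        "illness_present": [1, 0, 0, 0, 0, 0, 0, 0, 0, 0],
    }
)

# For a 0/1 target, the mean is the proportion of positive cases
positive_share = illness["illness_present"].mean()
print(f"Positive class: {positive_share:.0%}")  # Positive class: 10%
```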

Why is imbalanced data a problem?

Imbalanced datasets are common, but they cause problems in both model training and evaluation. Both steps are commonly run on the assumption that there is an adequate number of observations for each class. With an imbalanced dataset this assumption often doesn't hold, which causes problems if it isn't accounted for.

Let’s look at these two areas further.

Is imbalanced data a problem for model training?

An imbalanced dataset can cause issues when training a model, especially when the dataset is small. A model needs many observations of each target class to generalise adequately, so a small dataset may simply not contain enough minority class observations for the model to learn from. This leads to poor performance on both evaluation and scoring datasets.
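A class's share alone doesn't tell you how many minority examples a model actually has to learn from. The following minimal sketch uses the target column from the illness table above and reports raw counts alongside proportions with Pandas `value_counts`. The `summary` table and its column names are illustrative choices, not a standard format.

```python
import pandas as pd

# Target column from the illness table: one positive case out of ten
illness_present = pd.Series([1, 0, 0, 0, 0, 0, 0, 0, 0, 0], name="illness_present")

# Raw counts: how many examples of each class the model can learn from
counts = illness_present.value_counts()
# Proportions: how the classes compare to each other
shares = illness_present.value_counts(normalize=True)

# Combine both views into one table (rows are aligned on the class label)
summary = pd.DataFrame({"count": counts, "share": shares})
print(summary)
```

Here the positive class is 10% of rows but only a single observation. The same 10% share in a dataset of 10,000 rows would be 1,000 observations, which gives a model far more to learn from.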

Is imbalanced data a problem for model evaluation?

Imbalanced data can also make it hard to understand how well a model performs. When evaluating on imbalanced data, a model that only predicts the majority class well can appear highly performant on simple metrics such as accuracy, whilst in actuality it performs poorly on the minority class.

This means that metric choice becomes even more important in these situations.
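To see how accuracy can mislead, consider a hypothetical model that always predicts "no illness" for the ten patients in the illness table. The sketch below computes accuracy and, as one example of a metric that focuses on the minority class, recall (the share of actual positives the model catches). The helper functions are written from scratch for illustration. scikit-learn's `accuracy_score` and `recall_score` compute the same quantities.

```python
# Labels from the illness table: one positive case (1) out of ten patients
y_true = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]

# Hypothetical baseline "model" that always predicts the majority class (0 = no illness)
y_pred = [0] * len(y_true)


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def recall(y_true, y_pred, positive=1):
    """Fraction of actual positives that the model predicts as positive."""
    true_pos = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    actual_pos = sum(t == positive for t in y_true)
    return true_pos / actual_pos if actual_pos else 0.0


print(f"Accuracy: {accuracy(y_true, y_pred):.0%}")  # Accuracy: 90%
print(f"Recall:   {recall(y_true, y_pred):.0%}")    # Recall:   0%
```

The model scores 90% accuracy while detecting none of the positive cases.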

How do you know if your data is imbalanced?

There is no fixed definition of when a dataset is considered imbalanced, but in general a dataset is imbalanced when a single class accounts for a significant portion of it. For binary classification, a common rule of thumb is that a dataset is imbalanced when over 70% of observations are in one class.
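The rule of thumb can be written as a small check. This is a sketch: `majority_share` and `is_imbalanced` are hypothetical helper names, and the 0.7 threshold is the rough convention described above rather than a standard.

```python
from collections import Counter


def majority_share(labels):
    """Return the proportion of observations in the most common class."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    return max(counts.values()) / len(labels)


def is_imbalanced(labels, threshold=0.7):
    """Flag a dataset as imbalanced when one class holds over `threshold` of rows.

    The 0.7 default mirrors the rough 70% rule of thumb for binary
    classification; it is a convention, not a fixed definition.
    """
    return majority_share(labels) > threshold


print(is_imbalanced([1, 0, 0, 0, 0, 0, 0, 0, 0, 0]))  # True (90% in class 0)
print(is_imbalanced([0, 0, 1, 1, 1]))  # False (60% in class 1)
```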

How do you check for imbalanced data?

To check for imbalanced data, calculate the percentage of observations that fall into each target class, then see whether any single class makes up a significant portion of observations. This is simple to do in Python.

Let’s look at how to do this for a Pandas DataFrame.

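```python
import pandas as pd

# Toy dataset with a binary target and a single feature
df = pd.DataFrame({"classification_target": [0, 0, 1, 1, 1], "feature": [100, 110, 200, 250, 50]})

# normalize=True returns the proportion of rows in each class rather than raw counts
print(df["classification_target"].value_counts(normalize=True))

# Output (pandas < 2.0; from pandas 2.0 the last line reads "Name: proportion, dtype: float64"):
# 1    0.6
# 0    0.4
# Name: classification_target, dtype: float64
```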

Here we can see that the positive class makes up 60% of observations. This is below the 70% rule of thumb, so this dataset would not be considered imbalanced.

Does class imbalance affect model accuracy?

Class imbalance can have a large impact on model accuracy. If the model doesn't have enough examples of the minority class, it can be difficult to train, making the model less accurate. It's also important to choose an appropriate metric, because on imbalanced data a high accuracy score can hide poor performance on the minority class.
