MSE vs MAE, which is the better regression metric?

MSE and MAE are machine learning metrics which measure the performance of regression models. They’re both commonly used, so how do you know which is best for your use case? In this post I explain what they are, their similarities and differences, and help you choose the one which suits your needs.

Are MAE and MSE the same?

MAE and MSE have similar names and the same goal, to measure the error of regression models, but they are not the same. They actually have quite different approaches to measuring the prediction error.

Let’s explore this further by looking at their definitions.

What is MAE?

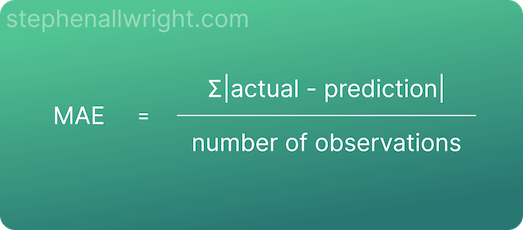

MAE (Mean Absolute Error) is the average absolute error between actual and predicted values.

Absolute error, also known as L1 loss, is a row-level error calculation: the non-negative difference between the prediction and the actual value. MAE is the mean of these errors across all rows, which helps us understand the model performance over the whole dataset.

MAE is a popular metric because its value is easy to interpret: it is expressed in the same units and on the same scale as the target you are predicting.

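The formula for calculating MAE is:

$$\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n} \lvert y_i - \hat{y}_i \rvert$$

where $n$ is the number of rows, $y_i$ is the actual value and $\hat{y}_i$ is the predicted value for row $i$.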
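As a minimal sketch of this calculation in plain Python, the function below averages the absolute differences row by row. The function name `mean_absolute_error_manual` and the sample values are illustrative assumptions, not part of any library.

```python
def mean_absolute_error_manual(actual, predicted):
    """Average of the absolute differences between actual and predicted values."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    if not actual:
        raise ValueError("at least one observation is required")
    # Row-level absolute (L1) errors
    absolute_errors = [abs(a - p) for a, p in zip(actual, predicted)]
    # Aggregate the row-level errors into a single mean
    return sum(absolute_errors) / len(absolute_errors)


# Illustrative values only
actual = [100, 120, 80, 110]
predicted = [90, 120, 50, 140]

mae = mean_absolute_error_manual(actual, predicted)
print(mae)  # 17.5
```

The result, 17.5, is in the same units as the target, which is what makes MAE straightforward to read.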

What is MSE?

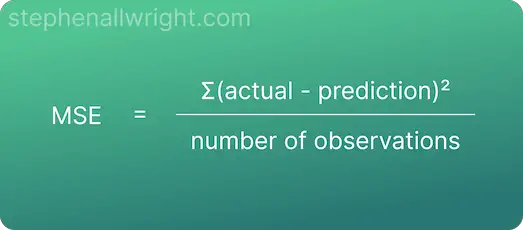

MSE (Mean Squared Error) is the average squared error between actual and predicted values.

Squared error, also known as L2 loss, is a row-level error calculation: the difference between the prediction and the actual value, squared. MSE is the mean of these errors across all rows, which helps us understand the model performance over the whole dataset.

The main draw for using MSE is that it squares the error, which results in large errors being punished or clearly highlighted. It’s therefore useful when working on models where occasional large errors must be minimised.

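The formula for calculating MSE is:

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2$$

where $n$ is the number of rows, $y_i$ is the actual value and $\hat{y}_i$ is the predicted value for row $i$.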
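As a minimal sketch of this calculation in plain Python, the function below averages the squared differences row by row. The function name `mean_squared_error_manual` and the sample values are illustrative assumptions, not part of any library.

```python
def mean_squared_error_manual(actual, predicted):
    """Average of the squared differences between actual and predicted values."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    if not actual:
        raise ValueError("at least one observation is required")
    # Row-level squared (L2) errors
    squared_errors = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    # Aggregate the row-level errors into a single mean
    return sum(squared_errors) / len(squared_errors)


# Illustrative values only
actual = [100, 120, 80, 110]
predicted = [90, 120, 50, 140]

mse = mean_squared_error_manual(actual, predicted)
print(mse)  # 475.0
```

Note that the result is in squared units of the target, so it cannot be read directly as a typical error size.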

What is the difference between squared error and absolute error?

As we see from the definitions of MAE and MSE, the key difference between them is that MAE uses the absolute error whilst MSE uses the squared error. But what is the difference between these two calculations?

The key difference between squared error and absolute error is that squared error punishes large errors to a greater extent than absolute error. Squaring makes the penalty grow quadratically with the size of the error, whereas the absolute error grows only linearly.

This distinction can be seen clearly when looking at an example:

| Prediction | Actual | Absolute error | Squared error |
|---|---|---|---|
| 140 | 120 | 20 | 400 |
| 190 | 200 | 10 | 100 |
| 80 | 50 | 30 | 900 |
| 140 | 180 | 40 | 1600 |
| 160 | 110 | 50 | 2500 |

Mean squared error (MSE) = 1100

Mean absolute error (MAE) = 30

As you can see, the larger the error, the starker the difference between squared error and absolute error. Going from an absolute error of 10 to 50 increases the squared error by 2400 (from 100 to 2500), which is the desired behaviour if your goal is to minimise the occurrence of these large outlier errors.
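The worked example can be checked with a short plain-Python sketch. It assumes five prediction/actual pairs whose absolute errors are 20, 10, 30, 40 and 50, as listed in the error columns; the last actual value is taken as 110 so that the pair matches the listed error of 50.

```python
# (prediction, actual) pairs; the last actual value of 110 is an assumption
# chosen so that the absolute error equals the listed value of 50
rows = [(140, 120), (190, 200), (80, 50), (140, 180), (160, 110)]

# Row-level errors
absolute_errors = [abs(prediction - actual) for prediction, actual in rows]
squared_errors = [error ** 2 for error in absolute_errors]

# Dataset-level metrics
mae = sum(absolute_errors) / len(rows)
mse = sum(squared_errors) / len(rows)

print(absolute_errors)  # [20, 10, 30, 40, 50]
print(squared_errors)   # [400, 100, 900, 1600, 2500]
print(mae)              # 30.0
print(mse)              # 1100.0
```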

Implementing MSE and MAE in Python using scikit-learn

To use these metrics in a machine learning project you will need to calculate them in code. Luckily this is simple in Python using the scikit-learn package, which provides both as ready-made functions. Here is a simple example of using them in practice:

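```python
from sklearn.metrics import mean_absolute_error, mean_squared_error

# Example actual (ground-truth) values and model predictions
actual = [100, 120, 80, 110]
predicted = [90, 120, 50, 140]

# Both functions take the actual values first, then the predictions
mse = mean_squared_error(actual, predicted)
mae = mean_absolute_error(actual, predicted)

print(mse)  # 475.0
print(mae)  # 17.5
```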

Why use MSE instead of MAE?

The main difference between MSE and MAE is how they deal with outliers. This difference affects which projects you should use them for, so let’s lay out the two situations where you should use MSE instead of MAE:

- When your project requires that you avoid occasional large errors as much as possible

- When you don’t need to communicate your error metrics to end users. This is because MSE is expressed in squared units of the target, which makes it harder to understand and interpret than MAE
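To illustrate the first point, here is a small plain-Python sketch comparing two hypothetical sets of prediction errors with the same MAE. The error values and the helper names `mean_abs` and `mean_sq` are made up for illustration. One set spreads the error evenly, while the other is mostly accurate but has a single large miss.

```python
def mean_abs(errors):
    """Mean absolute error, computed from a list of raw errors."""
    return sum(abs(e) for e in errors) / len(errors)


def mean_sq(errors):
    """Mean squared error, computed from a list of raw errors."""
    return sum(e ** 2 for e in errors) / len(errors)


# Hypothetical errors (prediction minus actual) for two models
errors_even = [20, 20, 20, 20, 20]   # consistently off by a moderate amount
errors_spiky = [5, 5, 5, 5, 80]      # mostly accurate, one large miss

print(mean_abs(errors_even), mean_abs(errors_spiky))  # 20.0 20.0
print(mean_sq(errors_even), mean_sq(errors_spiky))    # 400.0 1300.0
```

MAE rates the two models as equal, whereas MSE rates the model with the single large miss as much worse. If that large miss is costly in your project, MSE is the metric that will surface it.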

Which is better, MAE or MSE?

MAE and MSE are both good all-round metrics, but they each have their strengths and weaknesses. Ultimately, which is better depends on your project goal. If you want to train a model which focuses on reducing large outlier errors then MSE is the better choice, whereas if this isn’t important and you would prefer greater interpretability then MAE would be better.
