RMSE vs MAE, which is the best regression metric?

RMSE and MAE are both metrics for measuring the performance of regression machine learning models, but what’s the difference? In this post, I will explain what these metrics are, how they differ, and how to decide which is best for your project.

RMSE vs MAE, what are they?

Let’s start by defining what these two metrics are.

What is MAE?

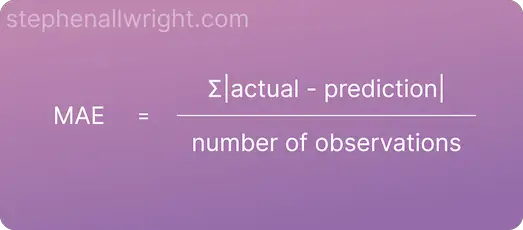

Mean Absolute Error (MAE) is the average absolute error between actual and predicted values.

Absolute error, also known as L1 loss, is a row-level error calculation where the non-negative difference between the prediction and the actual is calculated. MAE is the aggregated mean of these errors, which helps us understand the model performance over the whole dataset.

MAE is a popular metric to use as the error value is easily interpreted. This is because the value is on the same scale as the target you are predicting for.
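As a rough illustration of the calculation described above, here is a minimal Python sketch using only the standard library. The function name `mean_absolute_error` and the sample values are assumptions made for this example, not something taken from a particular library.

```python
def mean_absolute_error(actual, predicted):
    """Average of the absolute (non-negative) row-level errors."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    # Row-level absolute error (L1 loss) for each prediction
    absolute_errors = [abs(a - p) for a, p in zip(actual, predicted)]
    # Aggregate the row-level errors into a single mean
    return sum(absolute_errors) / len(absolute_errors)


# Hypothetical values, chosen only for illustration
actual = [3.0, 5.0, 2.0, 7.0]
predicted = [2.5, 5.0, 4.0, 8.0]

mae = mean_absolute_error(actual, predicted)
print(mae)  # 0.875
```

The result is in the same unit as the target, so here the model is off by 0.875 on average.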

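The formula for calculating MAE is:

$$\text{MAE} = \frac{1}{n} \sum_{i=1}^{n} \left| y_i - \hat{y}_i \right|$$

where $y_i$ is the actual value, $\hat{y}_i$ is the predicted value, and $n$ is the number of rows.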

What is RMSE?

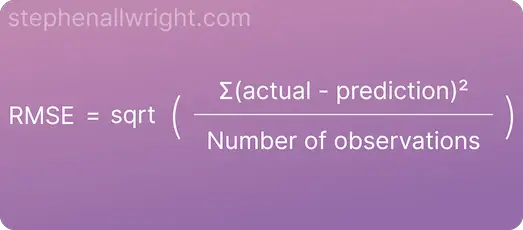

Root Mean Squared Error (RMSE) is the square root of the mean squared error between the predicted and actual values.

Squared error, also known as L2 loss, is a row-level error calculation where the difference between the prediction and the actual is squared. RMSE is the aggregated mean and subsequent square root of these errors, which helps us understand the model performance over the whole dataset.

A benefit of using RMSE is that the metric it produces is in terms of the unit being predicted. For example, using RMSE in a house price prediction model would give the error in terms of house price, which can help end users easily understand model performance.
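The same steps can be sketched for RMSE in Python with only the standard library: square each error, average, then take the square root. The function name `root_mean_squared_error` and the sample values are assumptions made for this example.

```python
import math


def root_mean_squared_error(actual, predicted):
    """Square root of the mean of the squared row-level errors."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    # Row-level squared error (L2 loss) for each prediction
    squared_errors = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    # Mean of the squared errors, then the square root to return to target units
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Hypothetical values, chosen only for illustration
actual = [3.0, 5.0, 2.0, 7.0]
predicted = [2.5, 5.0, 4.0, 8.0]

rmse = root_mean_squared_error(actual, predicted)
print(round(rmse, 3))  # 1.146
```

Taking the square root at the end is what brings the value back to the unit of the target; without it you would have the mean squared error, which is in squared units.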

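The formula for calculating RMSE is:

$$\text{RMSE} = \sqrt{\frac{1}{n} \sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2}$$

where $y_i$ is the actual value, $\hat{y}_i$ is the predicted value, and $n$ is the number of rows.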

What are the similarities between MAE and RMSE?

Aside from the fact that they both are error metrics for regression models, the other similarities are:

- Error is given in terms of the value you are predicting for

- The lower the value the more accurate the model is

- The resulting values can be between 0 and infinity

What is the difference between RMSE and MAE?

Whilst they both have the same goal of measuring regression model error, there are some key differences that you should be aware of:

- RMSE is more sensitive to outliers

- RMSE penalises large errors more than MAE due to the fact that errors are squared initially

- MAE returns values that are more interpretable as it is simply the average of absolute error
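To make these differences concrete, the sketch below compares two hypothetical sets of errors that have the same total error. In one the error is spread evenly; in the other it is concentrated in a single large miss. The helper names and numbers are assumptions for illustration only.

```python
import math


def mae_from_errors(errors):
    """MAE computed directly from a list of row-level errors."""
    return sum(abs(e) for e in errors) / len(errors)


def rmse_from_errors(errors):
    """RMSE computed directly from a list of row-level errors."""
    return math.sqrt(sum(e ** 2 for e in errors) / len(errors))


# Hypothetical errors: both lists sum to 40
even_errors = [10, 10, 10, 10]   # every prediction is off by 10
spiky_errors = [0, 0, 0, 40]     # three perfect predictions, one large miss

print(mae_from_errors(even_errors), rmse_from_errors(even_errors))    # 10.0 10.0
print(mae_from_errors(spiky_errors), rmse_from_errors(spiky_errors))  # 10.0 20.0
```

MAE cannot tell the two cases apart, while RMSE doubles for the case with the single large miss.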

Example of RMSE and MAE with outliers in the dataset

The key difference between the two metrics is how they behave when outliers are present in the dataset. Let’s look at this behaviour with an example where we are predicting house prices.

| Actual | Prediction | Absolute Error | Squared Error |
|---|---|---|---|
| 100,000 | 250,000 | 150,000 | 22,500,000,000 |
| 200,000 | 210,000 | 10,000 | 100,000,000 |
| 150,000 | 155,000 | 5,000 | 25,000,000 |
| 180,000 | 178,000 | 2,000 | 4,000,000 |
| 120,000 | 121,000 | 1,000 | 1,000,000 |

MAE = (150,000 + 10,000 + 5,000 + 2,000 + 1,000) / 5 = 33,600

RMSE = sqrt[(22,500,000,000 + 100,000,000 + 25,000,000 + 4,000,000 + 1,000,000) / 5] ≈ 67,276

Both metrics return the error on the same scale as the house prices we are predicting, but the RMSE is roughly twice the MAE. This is because the first prediction is off by 150,000, and squaring makes that single large error dominate the total.
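The house price example can be reproduced with a short Python sketch using the values from the table. It also recomputes both metrics with the first row left out, which is an extra step added here to show how much that one prediction contributes. The function names are assumptions for this example.

```python
import math


def mean_absolute_error(actual, predicted):
    """Mean of the absolute row-level errors."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def root_mean_squared_error(actual, predicted):
    """Square root of the mean of the squared row-level errors."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


# Values taken from the table
actual = [100_000, 200_000, 150_000, 180_000, 120_000]
predicted = [250_000, 210_000, 155_000, 178_000, 121_000]

mae = mean_absolute_error(actual, predicted)
rmse = root_mean_squared_error(actual, predicted)
print(f"MAE:  {mae:,.0f}")   # MAE:  33,600
print(f"RMSE: {rmse:,.0f}")  # RMSE: 67,276

# Same data without the first row, which has the 150,000 error
mae_rest = mean_absolute_error(actual[1:], predicted[1:])
rmse_rest = root_mean_squared_error(actual[1:], predicted[1:])
print(f"MAE without first row:  {mae_rest:,.0f}")   # MAE without first row:  4,500
print(f"RMSE without first row: {rmse_rest:,.0f}")  # RMSE without first row: 5,701
```

With the large error removed, the two metrics sit much closer together, which shows that the gap between them comes mostly from that single prediction.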

How to interpret RMSE and MAE

MAE is interpreted as the average size of the error you can expect when making a prediction with the model. RMSE, on the other hand, is harder to read directly: it is also an average error in the units of the target, but one where larger errors carry more weight because they are squared before averaging.

When to use RMSE or MAE

We've looked at the similarities and differences between RMSE and MAE, so when should you use one or the other?

It comes down to how heavily you want to penalise large errors. If your use case demands that occasional large mistakes in your predictions be avoided, use RMSE. If you want an error metric that treats all errors in proportion to their size and returns a more interpretable value, use MAE.
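The choice of metric can change which model looks better. The sketch below compares two hypothetical models on made-up data: one is consistently a little off, the other is usually very close but makes one large mistake. All names and values are assumptions for illustration.

```python
import math


def mean_absolute_error(actual, predicted):
    """Mean of the absolute row-level errors."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def root_mean_squared_error(actual, predicted):
    """Square root of the mean of the squared row-level errors."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


# Hypothetical targets and predictions from two hypothetical models
actual = [100, 100, 100, 100]
predictions = {
    "model_a": [105, 95, 105, 95],   # always off by 5
    "model_b": [101, 99, 101, 113],  # off by 1 three times, then off by 13
}

scores = {
    name: {
        "mae": mean_absolute_error(actual, preds),
        "rmse": root_mean_squared_error(actual, preds),
    }
    for name, preds in predictions.items()
}

# Lower is better for both metrics
best_by_mae = min(scores, key=lambda name: scores[name]["mae"])
best_by_rmse = min(scores, key=lambda name: scores[name]["rmse"])

print(best_by_mae)   # model_b
print(best_by_rmse)  # model_a
```

Here MAE favours the model with the smaller average error, while RMSE favours the model that avoids the large mistake, so it is worth deciding which behaviour matters before comparing models.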

Is RMSE better than MAE?

The better metric depends on your use case and dataset. However, RMSE is usually preferred over MAE for measuring model performance. This is because developers often want to reduce the occurrence of large errors in their predictions, and MAE can be seen as too simplistic for understanding overall model performance.
